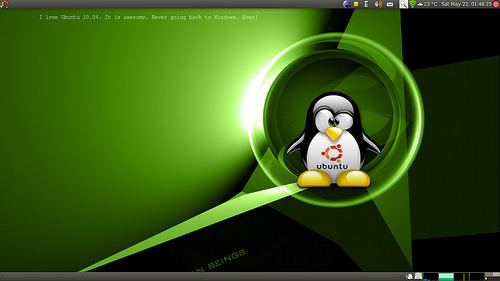

I worked on a cool hack that shows Twitter messages embedded in the desktop background and refreshes them automatically. The basic idea is to embed some dynamic text (which could be fetched from the web) in an SVG image and set that image as the desktop background. The SVG, in turn, references the actual wallpaper that we intend to use and draws the text on top of it.


Here are the steps:

- We start by creating an SVG template file called wall-tmpl.svg with the following contents and saving it in the Wallpapers directory (say, ~/Theme/Wallpapers):

<?xml version="1.0" encoding="UTF-8"?>
<!DOCTYPE svg PUBLIC "-//W3C//DTD SVG 1.0//EN" "http://www.w3.org/TR/2001/REC-SVG-20010904/DTD/svg10.dtd" [
<!ENTITY ns_imrep "http://ns.adobe.com/ImageReplacement/1.0/">
<!ENTITY ns_svg "http://www.w3.org/2000/svg">
<!ENTITY ns_xlink "http://www.w3.org/1999/xlink">
]>
<svg xmlns="http://www.w3.org/2000/svg" xmlns:xlink="http://www.w3.org/1999/xlink" width="1280" height="1024" viewBox="0 0 1280 1024" overflow="visible" enable-background="new 0 0 132.72 127.219" xml:space="preserve">
<image xlink:href="~/Theme/Wallpapers/-your-favorite-wall-paper-" x="0" y="0" width="1280" height="1024"/>
<text x="100" y="200" fill="white" font-family="Nimbus Mono L" font-size="14" kerning="2">%text</text>
</svg>
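The %text token in the template is a placeholder that gets replaced with the message text. A message can contain characters such as & or <, which would leave the generated SVG malformed if inserted as-is. Here is a minimal Python sketch of the substitution with XML escaping; the function name fill_template and the shortened one-line template are my own illustration, not part of the original setup.

```python
from xml.sax.saxutils import escape


def fill_template(template: str, message: str) -> str:
    """Replace every %text placeholder with an XML-safe copy of message."""
    # escape() turns &, < and > into entities so the SVG stays well-formed.
    return template.replace("%text", escape(message))


# Shortened stand-in for the <text> line of wall-tmpl.svg (illustrative only).
template = '<text x="100" y="200" fill="white">%text</text>'
print(fill_template(template, "Ubuntu & friends <3"))
```

In the real setup, the template would be read from wall-tmpl.svg and the result written to wall.svg.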

- Next, we create a script that fetches the most recent Twitter message matching a search (here, the query ubuntu) and embeds it in the image. The script is called change-wallpaper, is placed in the ~/bin directory, and must be executable. It contains the following:

text=`python -c "import urllib;print eval(urllib.urlopen('http://search.twitter.com/search.json?q=ubuntu&lang=en').read().replace('false', 'False').replace('true', 'True').replace('null', 'None'))['results'][0]['text'].replace('\!','').replace('/','\/')"`
cat ~/Theme/Wallpapers/wall-tmpl.svg | sed "s/%text/$text/g" > ~/Theme/Wallpapers/wall.svg
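The one-liner above turns the JSON response into a Python literal by replacing true, false and null and then calling eval, which executes whatever the server sends back. A hedged alternative is to parse the response with the standard json module. The sketch below assumes a response shaped like the one the script indexes into (a results list whose items have a text field); the sample payload and the function name latest_text are made up for illustration, and no network request is made.

```python
import json


def latest_text(payload: str) -> str:
    """Return the text of the first result in a search response, or ''."""
    # json.loads understands true/false/null natively, so no eval is needed.
    data = json.loads(payload)
    results = data.get("results", [])
    return results[0]["text"] if results else ""


# Hypothetical payload modeled on the ['results'][0]['text'] access above.
sample = '{"results": [{"text": "Hello from Ubuntu", "retweeted": false, "geo": null}]}'
print(latest_text(sample))
```

Returning an empty string when there are no results keeps the wallpaper step from failing on an empty search.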

- We then add the following entry to crontab to fetch Twitter messages every minute:

# m h dom mon dow command
* * * * * ~/bin/change-wallpaper

- Run the script once; it will create a file called wall.svg in your Wallpapers directory. Set this file as your desktop background and watch the background change every minute!

You could get very creative with this. You could have your calendar reminders embedded directly into your desktop background or you could have dynamically fetched background images with your own random fortune quote. The possibilities are enormous!